Neural Radiance Fields (NeRFs) excel in photorealistically rendering static scenes. However, rendering dynamic, long-duration radiance fields on ubiquitous devices remains challenging, due to data storage and computational constraints. In this paper, we introduce VideoRF, the first approach to enable real-time streaming and rendering of dynamic radiance fields on mobile platforms. At the core is a serialized 2D feature image stream representing the 4D radiance field all in one. We introduce a tailored training scheme directly applied to this 2D domain to impose the temporal and spatial redundancy of the feature image stream. By leveraging the redundancy, we show that the feature image stream can be efficiently compressed by 2D video codecs, which allows us to exploit video hardware accelerators to achieve real-time decoding. On the other hand, based on the feature image stream, we propose a novel rendering pipeline for VideoRF, which has specialized space mappings to query radiance properties efficiently. Paired with a deferred shading model, VideoRF has the capability of real-time rendering on mobile devices thanks to its efficiency. We have developed a real-time interactive player that enables online streaming and rendering of dynamic scenes, offering a seamless and immersive free-viewpoint experience across a range of devices, from desktops to mobile phones.

Overview

Our proposed VideoRF views a dynamic radiance field as a 2D feature video stream combined with deferred rendering. This representation lends itself to hardware video decoding and shader-based rendering, enabling smooth, high-quality rendering across diverse devices.

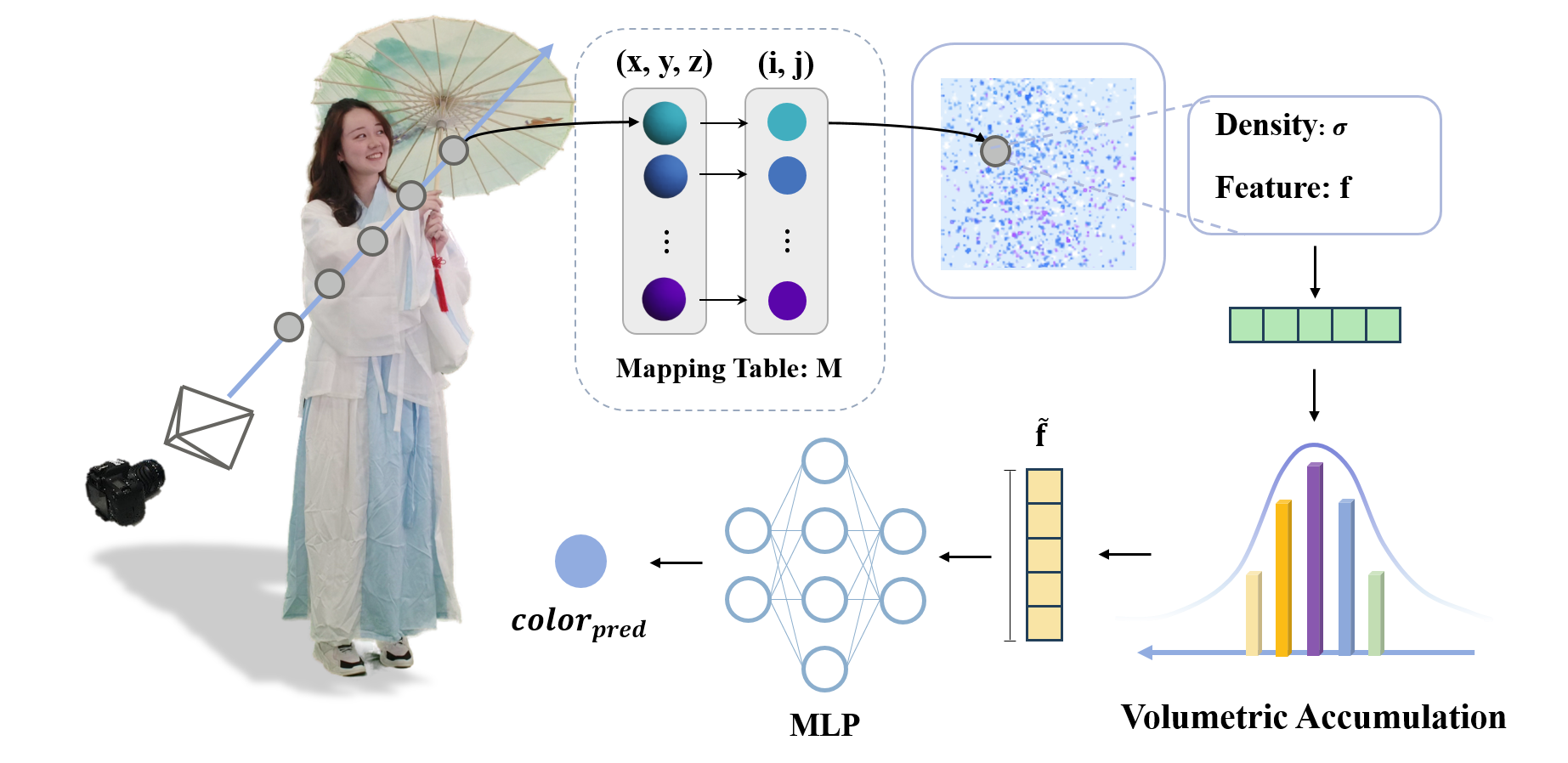

VideoRF Representation

Demonstration of the VideoRF representation. For each 3D sample point, its density σ and feature f are fetched from the 2D feature image through the mapping table M. The point features along a ray are first volumetrically accumulated into the ray feature f̃, which is then passed through the MLP Φ to decode the ray color.

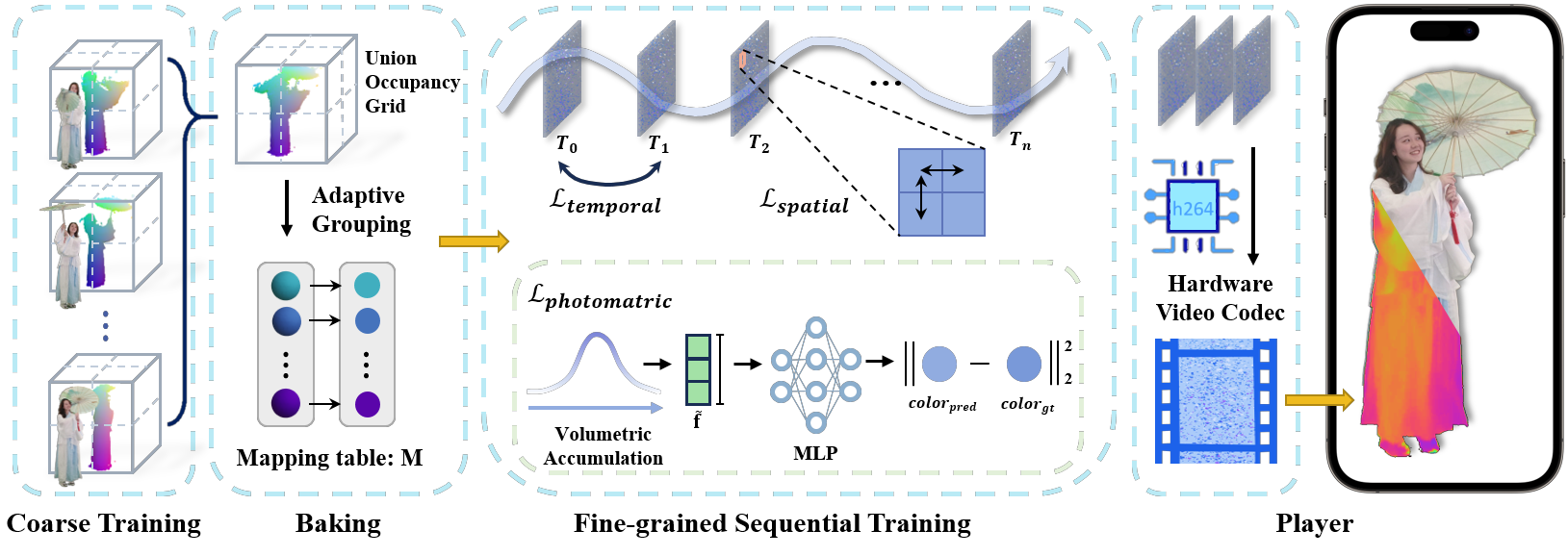
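The lookup and accumulation described above can be sketched in a few lines of plain Python. This is only an illustrative sketch under stated assumptions, not the authors' implementation: the names `accumulate_ray_feature` and `decode_color`, the dictionary-based mapping table and feature image, the fixed step size, and the single-layer stand-in for the MLP Φ are all hypothetical choices made for readability.

```python
import math


def accumulate_ray_feature(samples, mapping_table, feature_image, feature_dim, step=1.0):
    """Accumulate per-sample features along one ray (standard alpha compositing).

    samples: voxel coordinates (x, y, z) along the ray, ordered near to far.
    mapping_table: dict voxel -> (u, v) pixel in the feature image (plays the role of M);
                   voxels absent from the table are treated as empty space (assumption).
    feature_image: dict (u, v) -> (sigma, feature list of length feature_dim).
    Returns the accumulated ray feature and the ray opacity.
    """
    transmittance = 1.0
    ray_feature = [0.0] * feature_dim
    for voxel in samples:
        uv = mapping_table.get(voxel)
        if uv is None:
            continue  # empty voxel: nothing stored in the feature image
        sigma, feature = feature_image[uv]
        alpha = 1.0 - math.exp(-sigma * step)
        weight = transmittance * alpha
        for i in range(feature_dim):
            ray_feature[i] += weight * feature[i]
        transmittance *= 1.0 - alpha
    return ray_feature, 1.0 - transmittance


def decode_color(ray_feature, weights, biases):
    """Toy stand-in for the MLP: one linear layer followed by a sigmoid per channel."""
    color = []
    for row, bias in zip(weights, biases):
        z = sum(w * f for w, f in zip(row, ray_feature)) + bias
        color.append(1.0 / (1.0 + math.exp(-z)))
    return color
```

Because the decoder runs once per ray on the accumulated feature rather than once per sample, the cost of the network is independent of the number of samples along the ray, which is the point of deferred shading.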

Training Pipeline

Overview of our video codec-friendly training. First, we apply our grid-based coarse training to generate a per-frame occupancy grid. Then, during baking, we adaptively group the frames and create a mapping table M for each group. Next, we sequentially train each feature image with our spatial, temporal, and photometric losses. Finally, the feature images are compressed into a feature video that is streamed to the player.

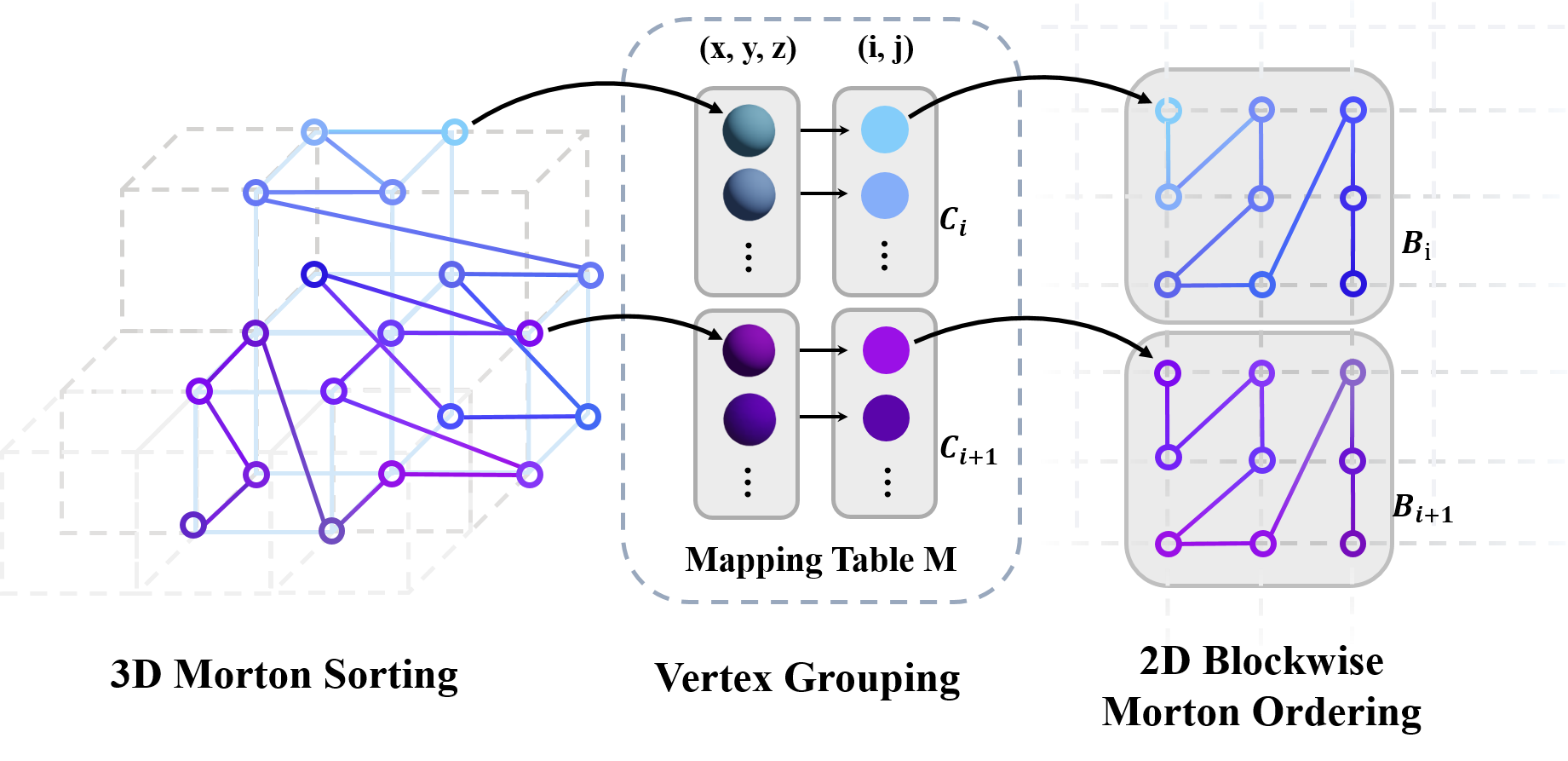
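To make the three loss terms concrete, here is a minimal sketch of how such regularizers are commonly written. The exact formulations and weights are not given on this page, so everything below is an assumption: the spatial term is a total-variation-style penalty on neighboring pixels, the temporal term is a mean absolute difference against the previous feature image, the photometric term is a mean squared error, and the function names and default weights are hypothetical. Feature images are single-channel nested lists for brevity.

```python
def spatial_loss(image):
    """Mean absolute difference between horizontally and vertically adjacent pixels."""
    height, width = len(image), len(image[0])
    total, count = 0.0, 0
    for r in range(height):
        for c in range(width):
            if c + 1 < width:
                total += abs(image[r][c + 1] - image[r][c])
                count += 1
            if r + 1 < height:
                total += abs(image[r + 1][c] - image[r][c])
                count += 1
    return total / count if count else 0.0


def temporal_loss(image, previous):
    """Mean absolute difference between a feature image and the previous frame's."""
    diffs = [abs(a - b)
             for row_a, row_b in zip(image, previous)
             for a, b in zip(row_a, row_b)]
    return sum(diffs) / len(diffs)


def photometric_loss(rendered, target):
    """Mean squared error between rendered and ground-truth color values."""
    return sum((r - t) ** 2 for r, t in zip(rendered, target)) / len(rendered)


def total_loss(image, previous, rendered, target, w_spatial=0.1, w_temporal=0.1):
    """Weighted sum of the three terms; the weights are illustrative, not from the paper."""
    return (photometric_loss(rendered, target)
            + w_spatial * spatial_loss(image)
            + w_temporal * temporal_loss(image, previous))
```

Penalties of this kind push neighboring pixels and consecutive frames toward similar values, which is the sort of redundancy that 2D video codecs exploit through intra-frame and inter-frame prediction.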

Mapping Table Generation

Illustration of our mapping table generation. We first sort the nonempty vertices in 3D Morton order and group them into chunks C_i. Next, we lay out each chunk in a block B_i of the feature image, arranging its entries in 2D Morton order within the block.

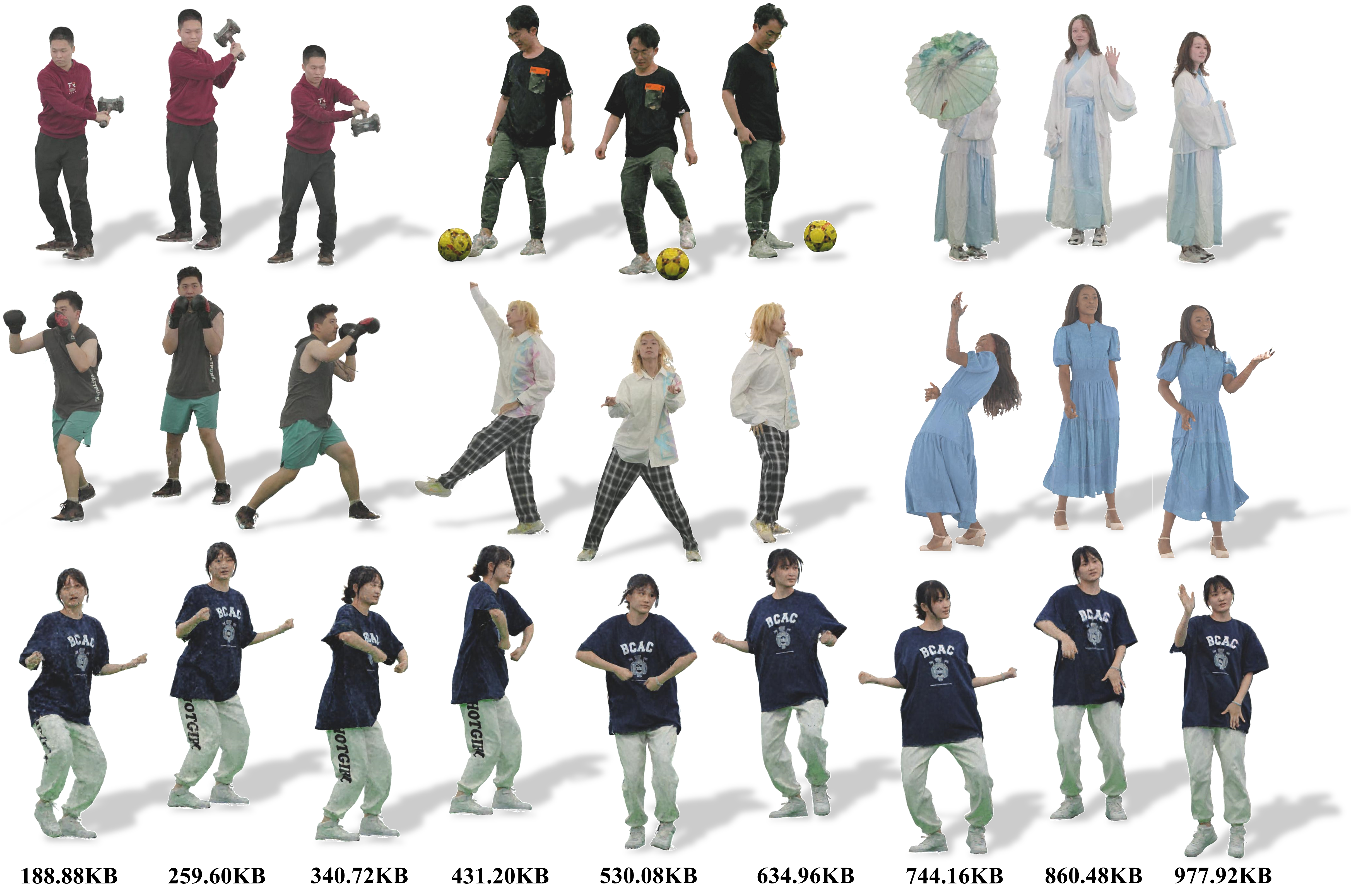
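The two Morton orderings can be illustrated with a short sketch. It assumes integer voxel coordinates, square blocks whose pixel count equals the chunk length, and blocks placed row by row in the feature image; the block placement, the bit ordering (x as the lowest interleaved bit), and the names `morton3d`, `morton2d_decode`, and `build_mapping_table` are assumptions made for illustration rather than details taken from the paper.

```python
def morton3d(x, y, z, bits=10):
    """Interleave the bits of x, y, z into a single 3D Morton code."""
    code = 0
    for i in range(bits):
        code |= ((x >> i) & 1) << (3 * i)
        code |= ((y >> i) & 1) << (3 * i + 1)
        code |= ((z >> i) & 1) << (3 * i + 2)
    return code


def morton2d_decode(index, bits=16):
    """De-interleave a 2D Morton index into pixel offsets (u, v)."""
    u = v = 0
    for i in range(bits):
        u |= ((index >> (2 * i)) & 1) << i
        v |= ((index >> (2 * i + 1)) & 1) << i
    return u, v


def build_mapping_table(nonempty_voxels, block_size=8, blocks_per_row=4):
    """Map each nonempty voxel to a pixel (u, v) of the feature image.

    Voxels are sorted by 3D Morton code and cut into chunks of
    block_size * block_size entries; chunk i fills block i, and entries
    inside a block follow 2D Morton order. block_size should be a power of two.
    """
    chunk_len = block_size * block_size
    ordered = sorted(nonempty_voxels, key=lambda p: morton3d(*p))
    table = {}
    for rank, voxel in enumerate(ordered):
        chunk_id, offset = divmod(rank, chunk_len)
        block_row, block_col = divmod(chunk_id, blocks_per_row)  # row-major blocks (assumption)
        du, dv = morton2d_decode(offset)
        table[voxel] = (block_col * block_size + du, block_row * block_size + dv)
    return table
```

Morton order keeps points that are close in 3D close in the sorted sequence, and the 2D Morton layout keeps them close within a block, so spatially neighboring voxels tend to land on neighboring pixels of the feature image.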

Results

Our VideoRF method generates results for inward-facing, 360° video sequences featuring human-object interactions with large motion. The images in the last row show the same sequences rendered at variable bitrates.

Bibtex

Qiang Hu and Jingyi Yu and Lan Xu and Minye Wu}, year={2023}, eprint={2312.01407}, archivePrefix={arXiv}, primaryClass={cs.CV} }